Infinite Stable Intelligence

Decentralized, Local AI

in a Single API

QVAC is Tether’s answer to centralized AI: an entirely new paradigm in which intelligence runs privately, locally, and without permission on any device. The era of Stable Intelligence has begun.

A complete SDK for running AI models locally

Unstoppable AI

Eliminate central points of failure so your world keeps thinking even if the internet goes down. QVAC is built to be resilient, efficient, and completely decentralized.

Sovereign Mind

Intelligence is not a service to rent; it is a foundational element to be possessed. QVAC turns AI into a personal raw material embedded into your own reality.

Infinite Scale

QVAC scales intelligence to ten billion humans by pushing it to the edge. We are constrained only by the number of devices on Earth, not by the number of GPUs in a data center.

Physics-First

Centralized clouds are bound by the laws of physics: every request must travel to a data center and back, at best at the speed of light. Local intelligence removes that network round trip, ensuring thought becomes instant action.

Fabric LLM

A hardware-agnostic engine that uses the Vulkan API to run on any GPU. It is the first framework to enable LLM fine-tuning directly on mobile devices.

Genesis Data

Massive synthetic datasets with 148 billion tokens for STEM and logic. We are leveling the playing field by providing the data to train independent models.

Local Ecosystem

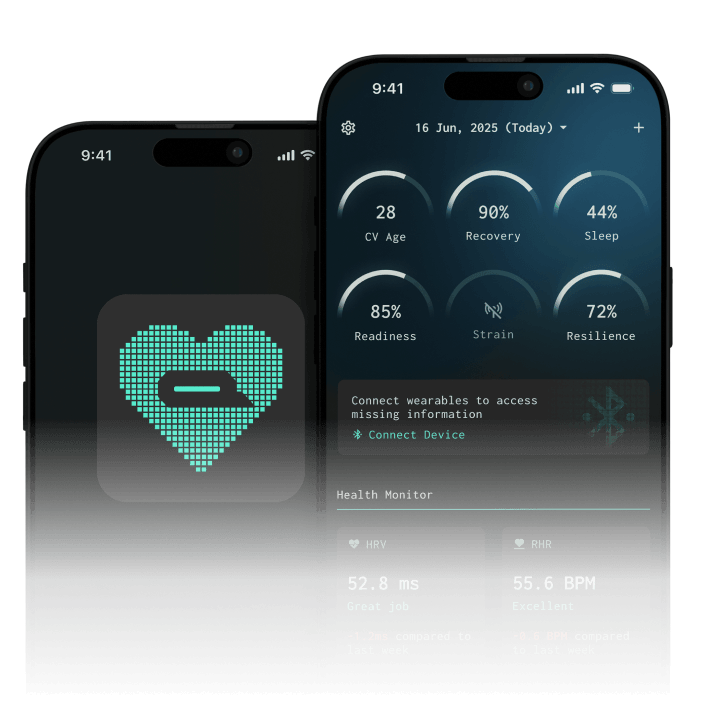

A complete suite of privacy-first tools including Health and Workbench. Every process, from biometric tracking to RAG, stays entirely on your device.

Machine Economy

QVAC AI agents act autonomously in the physical and digital world. The integrated WDK (Wallet Development Kit) lets agents transact in Bitcoin and USDt without intermediaries.

Simple, powerful API

With our tools you can power your apps with local AI for multiple use cases through a single interface that is cross-platform and P2P-native.

Run your code on Linux, macOS, Windows, Android, and iOS without changing a single line.

    import { loadModel, completion, unloadModel } from "@qvac/sdk";

    // Load model into memory
    const modelId = await loadModel(
      // P2P peer link, filepath to local model or model URL
      { modelType: "llm" },
    );

    // Use the loaded model
    const response = completion({
      modelId,
      history: [
        { role: "system", content: "You are QVAC by Tether, a human assistant" },
        { role: "user", content: "What can I do in Paris on a weekend?" }
      ],
      stream: false,
      // or use kvCache for faster inference
    });
    const text = await response.text;
    console.log(text);

    // You can use the loaded model multiple times

    // Unload model to free up system resources
    await unloadModel({ modelId });
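The snippet above unloads the model as its last step, which means an error thrown during completion would leave the model in memory. One way to guard against that is a small wrapper built on `try`/`finally`. The sketch below is illustrative only: `LocalAiSdk`, `ChatMessage`, `ModelId`, `withModel`, and `createFakeSdk` are hypothetical names, and the type shapes are guessed from the three calls shown above rather than taken from the actual `@qvac/sdk` typings, which may differ. It runs against an in-memory stand-in, so no model or SDK install is needed to try it.

```typescript
// Sketch only: these types mirror the three calls shown in the snippet above.
// The real @qvac/sdk signatures may differ; every type here is an assumption.
type ModelId = string; // assumed; the source does not state the type of modelId

interface ChatMessage {
  role: string;
  content: string;
}

interface LocalAiSdk {
  loadModel(source: string, options: { modelType: string }): Promise<ModelId>;
  completion(request: {
    modelId: ModelId;
    history: ChatMessage[];
    stream: boolean;
  }): { text: Promise<string> };
  unloadModel(request: { modelId: ModelId }): Promise<void>;
}

// Hypothetical helper: load a model, run `use`, and always unload afterwards.
async function withModel<T>(
  sdk: LocalAiSdk,
  source: string,
  use: (modelId: ModelId) => Promise<T>,
): Promise<T> {
  const modelId = await sdk.loadModel(source, { modelType: "llm" });
  try {
    return await use(modelId);
  } finally {
    // Runs on success and on error, so resources are released either way.
    await sdk.unloadModel({ modelId });
  }
}

// In-memory stand-in that records call order; it performs no real inference.
function createFakeSdk(): { sdk: LocalAiSdk; events: string[] } {
  const events: string[] = [];
  const sdk: LocalAiSdk = {
    async loadModel(source) {
      events.push("load");
      return `fake:${source}`;
    },
    completion({ history }) {
      events.push("completion");
      const last = history[history.length - 1];
      return { text: Promise.resolve(`echo: ${last ? last.content : ""}`) };
    },
    async unloadModel() {
      events.push("unload");
    },
  };
  return { sdk, events };
}

// Usage: the callback receives the model id and returns the completion text.
async function demo(): Promise<string> {
  const { sdk } = createFakeSdk();
  return withModel(sdk, "stub-model", (modelId) =>
    sdk.completion({
      modelId,
      history: [{ role: "user", content: "hi" }],
      stream: false,
    }).text,
  );
}
```

If the real SDK matches these shapes, the imported functions could be passed in place of the stand-in. Keeping the dependency as a parameter also makes the load and unload ordering easy to check in tests.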

Everything you need

to build with local AI

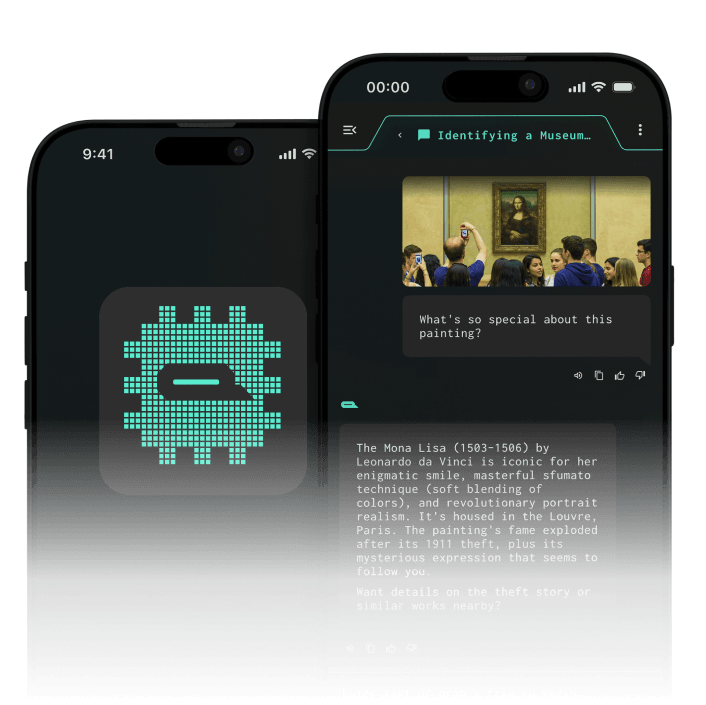

Local Intelligence in Action

Most AI apps trade your privacy for convenience. QVAC doesn’t. Our tools run locally and learn from your device, never the cloud. Whether it’s a heartbeat or a conversation, your data stays yours. Always private, always encrypted, always yours.